Why Healthcare AI Pilots Succeed and Production Deployments Don't

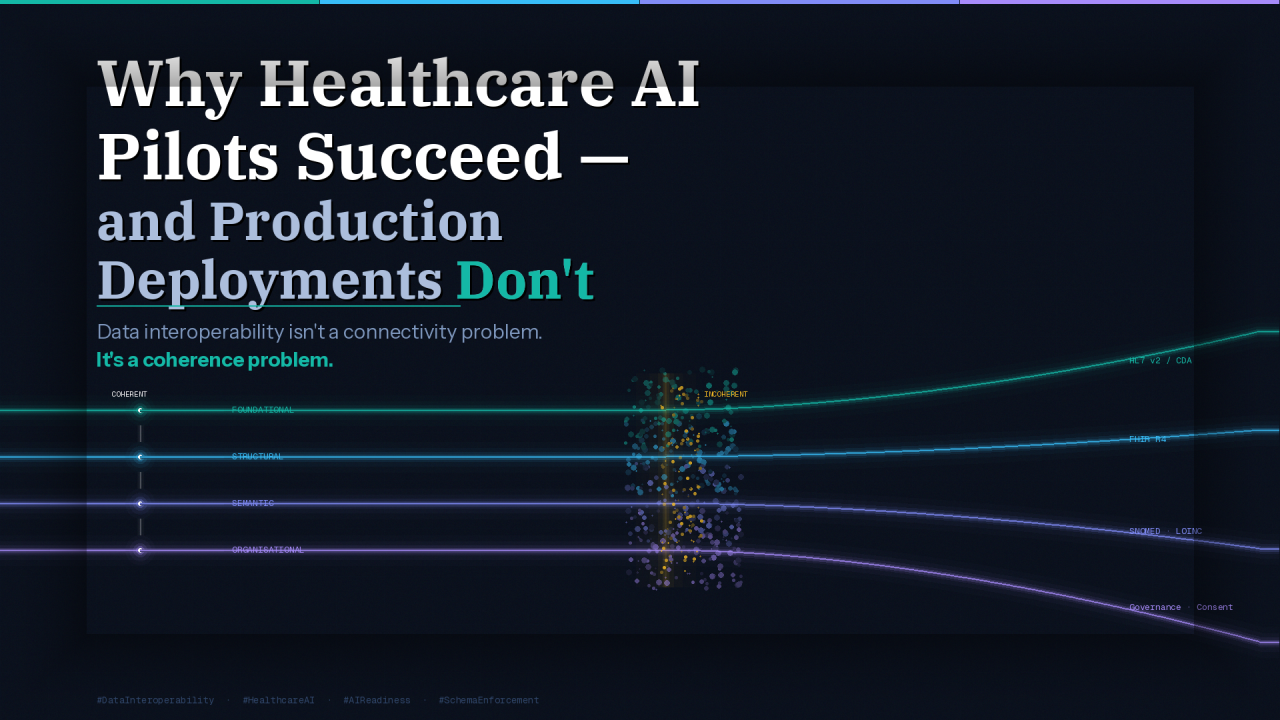

Data interoperability isn't a connectivity problem. It's a coherence problem.

Data interoperability isn't a connectivity problem. It's a coherence problem.

The reason most healthcare AI pilots succeed in controlled environments but fail at scale has nothing to do with the model. It has to do with what "connected data" actually means — and most organisations are solving the wrong layer.

Data interoperability has four levels: Foundational, Structural, Semantic, and Organisational. Most organisations confuse them, which is exactly why their AI initiatives stall before they can expand. You cannot build a trustworthy AI layer on top of an interoperability problem you haven't correctly diagnosed.

Most healthcare organisations have foundational and structural interoperability solved to varying degrees. But the most consequential and expensive problems reside at the semantic and organisational layers, which is precisely why so many AI pilots succeed in controlled environments but fail at scale. The data appears connected, but it isn't coherent. Incoherent data produces models that are confidently wrong, and in healthcare, that carries real consequences.

Semantic interoperability is where AI readiness lives or dies.

If "Type 2 Diabetes" is coded differently across your EHR, your lab system, and your claims data, your model isn't learning a clinical reality — it's learning inconsistency. It cannot generalise. It cannot be trusted in production. Semantic alignment is a prerequisite for any AI initiative worth funding.

A critical distinction that most organisations miss: FHIR alone does not resolve semantic interoperability. It structures the exchange, but it does not guarantee meaning. That requires terminology binding on top of it — SNOMED, LOINC, and RxNorm. A model ingesting FHIR-formatted data that isn't terminology-bound is still learning from noise. It just looks cleaner on the surface.

Beyond terminology, entity resolution becomes a model-integrity issue, not just a data-quality issue. If your platform cannot consistently answer "is this the same patient across all these records," your AI model is training on a fractured representation of reality. Duplicate records inflate population counts. Fragmented records create an incomplete patient context.

And here is the consequence most people never see coming: if certain populations have higher rates of fragmented records — which they do — your model develops a systematic blind spot for the populations requiring the most precise care. That is how algorithmic bias is quietly manufactured at the data layer, long before a model is ever deployed. It isn't an algorithmic failure. It's a data infrastructure failure that the algorithm faithfully reflects.

Organisational interoperability is the most underestimated factor across health systems. The hardest interoperability problems aren't technical — they're structural. Clinical departments protect their own data because they have no incentive to share it. Legal teams are risk-averse about exchange in the absence of clearly governed frameworks. Vendor contracts impose rigid data-sharing constraints that were never designed with AI in mind.

The result is an organisation where the data is technically accessible but practically siloed, and where every AI initiative triggers the same negotiation from scratch. The governance, consent, and trust frameworks that enable safe data exchange are what determine whether AI can be deployed ethically and at scale. Without consent management and audit trails built into the platform architecture, you cannot legally or responsibly use patient data to train models.

HIPAA governs data-sharing agreements and audit requirements for any system that touches PHI, including AI training pipelines. The 21st Century Cures Act prohibits information blocking and mandates open APIs — which means interoperability is increasingly a legal obligation, not a technical aspiration. ONC HTI-1 advances USCDI as the data element standard, directly shaping what a compliant, AI-ready data layer must include. Building to these requirements isn't overhead; it's the foundation your AI programme will eventually need, whether you planned for it or not.

The organisations that make real progress don't pursue full platform transformation from the outset. They build organisational confidence incrementally by prioritising high-value data domains first and demonstrating coherence before expanding scope.

Establishes the canonical data contract that AI pipelines can reliably consume. It is the starting point — not because FHIR solves everything, but because without it, everything else is custom and fragile.

Raw data in bronze for lineage. Normalised and terminology-bound records in silver. Curated, AI-ready datasets in gold. No model touches bronze. This separation is what makes data quality auditable and defensible.

Every training dataset must represent coherent, resolved entities. Without this, you are not training a model on patient data — you are training it on a probabilistic guess about what a patient's data looks like.

Access controls, audit logging, and consent management built into the platform — not bolted on later. This is what makes AI deployment legally defensible and regulatory audit-ready under HIPAA, the Cures Act, and emerging AI-specific frameworks.

Every pipeline draws from the same controlled vocabulary. This is the operational difference between a model that generalises across populations and one that doesn't — between AI that earns clinical trust and AI that quietly underperforms.

The goal is not perfect interoperability for its own sake. It is a data foundation that makes AI trustworthy, not just possible.

The organisations that will lead in healthcare AI are those whose data are coherent, governed, and semantically consistent enough to train on, audit, and defend in production.

Read the original on LinkedIn